Basilica (SN39) has announced its first public subnet partnership since overhauling its architecture, teaming up with Gradients (SN56) to power reinforcement learning (RL) evaluation workloads inside the Bittensor ecosystem.

The integration marks a shift in how compute infrastructure is being built and consumed across Bittensor subnets. Rather than competing on pure GPU arbitrage, Basilica is positioning itself as workflow-native infrastructure designed specifically for decentralized AI.

"We made a deliberate choice: stop trying to compete on pure compute arbitrage and start building for the workflows that Bittensor subnets actually need." - Team Basilica

RL Evaluations Move On-Chain Infrastructure Forward

Gradients operates at two levels within Bittensor.

As a user-facing product, it runs AutoML training jobs on user data. As a subnet, it organizes open-source tournaments to produce optimal AutoML code, which requires continuous training and evaluation cycles.

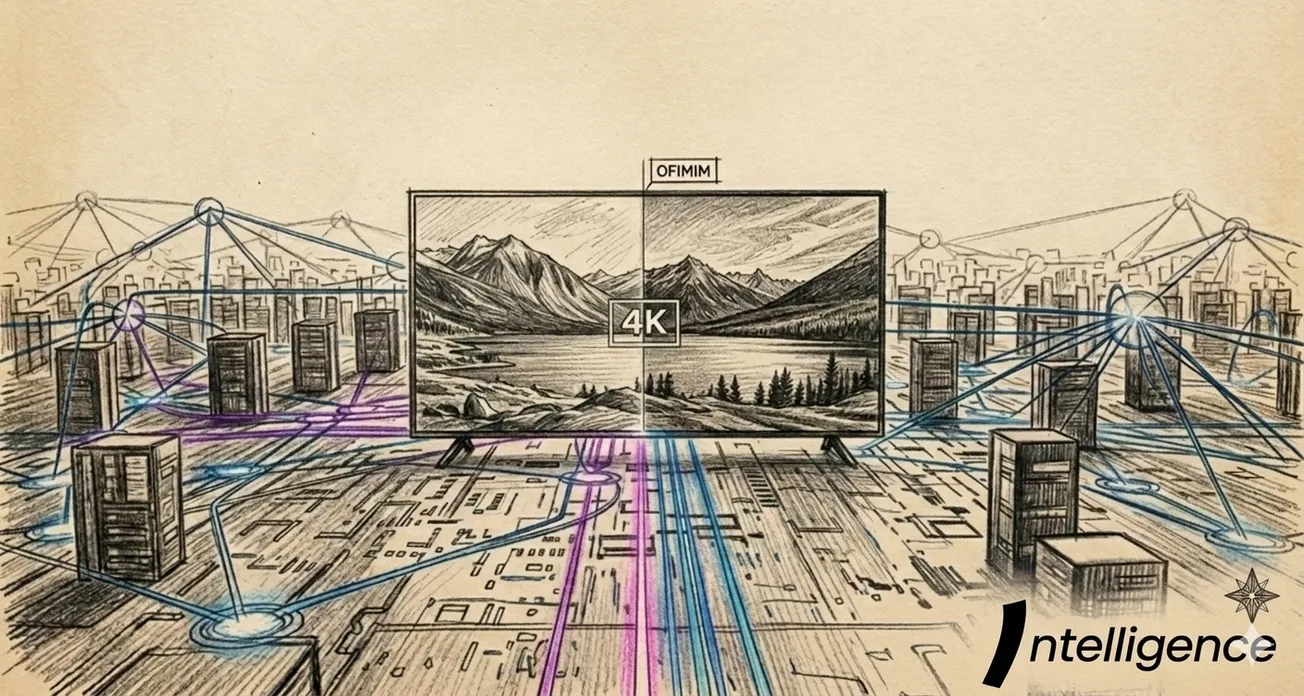

Those RL evaluations now run on Basilica’s infrastructure using serverless compute and container runtimes. The integration enables Gradients to provision GPUs programmatically, execute evaluation jobs, and scale workloads without directly managing cloud infrastructure.

Under the hood, the evaluation pipeline is coordinated through Affine (SN120), which structures RL competitions so that miners compete, validators score performance, and rewards flow toward the most performant models. Gradients executes its RL evaluation workloads on Basilica containers via Affine’s orchestration layer.

The result is a modular stack:

- Gradients: AutoML tournaments and training logic

- Affine: RL competition and reward coordination

- Basilica: Compute provisioning and execution environment

Together, the three subnets form a composable architecture within Bittensor, where the infrastructure, coordination, and modeling layers remain distinct yet interoperable.
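
To make the layering concrete, here is a minimal, purely illustrative sketch of how three such roles could stay separate behind narrow interfaces. Every name in it (`ComputeBackend`, `LocalBackend`, `Coordinator`, `run_tournament`, the miner names and scores) is hypothetical and chosen for this example. None of it reflects the actual Basilica SDK, Affine's orchestration code, or Gradients' tournament logic, and the "jobs" here run in-process rather than on GPU containers.

```python
from dataclasses import dataclass
from typing import Callable, Protocol

# A job is any zero-argument callable that returns a score (hypothetical shape).
EvalJob = Callable[[], float]


class ComputeBackend(Protocol):
    """Execution layer: the role the article assigns to Basilica."""

    def run(self, job: EvalJob) -> float: ...


class LocalBackend:
    """Stand-in backend that runs the job in-process, not in a remote container."""

    def run(self, job: EvalJob) -> float:
        return job()


@dataclass
class Coordinator:
    """Coordination layer: the role the article assigns to Affine."""

    backend: ComputeBackend

    def rank(self, submissions: dict[str, EvalJob]) -> list[tuple[str, float]]:
        # Execute every submission on the backend, then sort best-first.
        scores = {miner: self.backend.run(job) for miner, job in submissions.items()}
        return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def run_tournament(coordinator: Coordinator, submissions: dict[str, EvalJob]) -> str:
    """Modeling layer: the role the article assigns to Gradients."""
    leaderboard = coordinator.rank(submissions)
    return leaderboard[0][0]  # name of the top-scoring miner


# Made-up scores, for illustration only.
submissions = {"miner_a": lambda: 0.72, "miner_b": lambda: 0.91}
winner = run_tournament(Coordinator(LocalBackend()), submissions)
```

The point of the sketch is the dependency direction: the tournament code only talks to the coordinator, and the coordinator only talks to a backend interface, so any one layer could be swapped out without touching the other two.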

From Arbitrage to Workflow-Specific Compute

The partnership comes shortly after Basilica’s architectural relaunch. Instead of competing with hyperscalers on raw pricing, the subnet has focused on infrastructure tailored to Bittensor-native machine learning workloads.

According to the announcement, Gradients required:

- Reliable GPU provisioning

- Predictable baseline pricing

- Containerized environments for experimentation

- Serverless functions for inference

- Infrastructure capable of sustained training and ephemeral inference

Basilica’s updated stack includes GPU and CPU rentals benchmarked against major cloud providers, sandboxed container runtimes, serverless inference functions, and an SDK designed for programmatic access, including agent-native interfaces.

The partnership is structured as a non-zero-sum relationship. Instead of competing for emissions, the two networks are aligning incentives. Gradients gains sustainable compute terms and infrastructure optimized for RL evaluation patterns. Basilica, in turn, gains live production workloads that stress-test and refine its infrastructure.

Basilica indicated that additional subnet partnerships are in progress, with Gradients serving as the first public deployment under its new model. For Gradients specifically, tighter integration into its training pipeline and expanded workload support are already being explored.