
Video now accounts for roughly 65% of all global internet traffic, according to ITU data from 2022. Every gigabyte costs money to store. Every delivery costs money to move. And the companies charging for both (AWS, Google Cloud, Azure) have no incentive to change.

The result: the industry compromises. Security operators compress surveillance footage until faces blur. Platforms store video at lower resolution to cut costs. Medical systems trade diagnostic detail for file size. The codecs running all of this, H.264 (standardized in 2003) and its successor H.265, still rest on a block-based design that has not fundamentally changed in twenty years. They apply the same fixed, hand-designed coding tools to every video, regardless of what is in the frame.

The problem is not the codecs alone. It is the architecture. One provider. One pricing structure. No single company in the supply chain has the incentive to build something different.

That is the problem Vidaio (Subnet 85) is building against.

Three forces make the problem structural. Storage costs scale linearly with content volume, with no ceiling. Quality degrades at the edges: users far from data centers receive worse video. And pricing power sits entirely with whoever owns the servers. Clients have no real alternative: switching costs are high, the barriers to migration are steep, and every additional hour of stored video tightens the lock-in.

Vidaio is building the alternative, starting with file size. Storage and bandwidth are the largest infrastructure expenses for any video platform, and any reduction in file size cuts both. At scale, the financial impact is material. Vidaio is building toward an 80% reduction in file size.

AI Compression That Adapts to the Video

Vidaio is a decentralized video processing network built on Bittensor. Two core functions: AI-powered video upscaling and AI-driven compression. Both run through a distributed network of miners and validators, not a centralized server stack.

The upscaling product converts low-resolution footage, including standard HD, degraded surveillance video, and archival content, to high resolution using deep learning. Traditional interpolation (bilinear or bicubic, for example) estimates each missing pixel as a weighted average of its neighbors, which smooths the image but cannot add texture. Vidaio's models instead analyze patterns, textures, and edges to reconstruct detailed frames. The models optimize for perceptual quality: how footage looks to the human eye, not mathematical accuracy alone.
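For readers unfamiliar with classical upscaling, here is a minimal sketch of bilinear interpolation, one of the traditional methods the upscaling paragraph contrasts with learned models. It is a generic textbook technique, not Vidaio's code; the function name `bilinear_upscale` and the list-of-lists grayscale image format are assumptions made for illustration. Every output pixel is a weighted average of at most four input pixels, so the result cannot contain detail that was not already present in the neighborhood.

```python
from typing import List

Image = List[List[float]]  # grayscale image: rows of pixel intensities


def bilinear_upscale(img: Image, scale: int) -> Image:
    """Upscale a grayscale image by an integer factor using bilinear
    interpolation with corner-aligned sampling."""
    if scale < 1 or not img or not img[0]:
        raise ValueError("need a non-empty image and scale >= 1")
    h, w = len(img), len(img[0])
    out_h, out_w = h * scale, w * scale
    out: Image = []
    for y in range(out_h):
        # Map the output row back to a fractional input row.
        sy = y * (h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            # Map the output column back to a fractional input column.
            sx = x * (w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, w - 1)
            fx = sx - x0
            # Blend horizontally on the two neighboring rows, then vertically.
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


small = [[0.0, 100.0], [100.0, 200.0]]
big = bilinear_upscale(small, 2)  # 4x4 output
for r in big:
    print(" ".join(f"{v:6.1f}" for v in r))
```

The 2x2 input becomes a 4x4 grid whose corners match the original and whose interior values are smooth blends between them. No new edges or textures appear, which is the gap learned super-resolution models aim to close.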

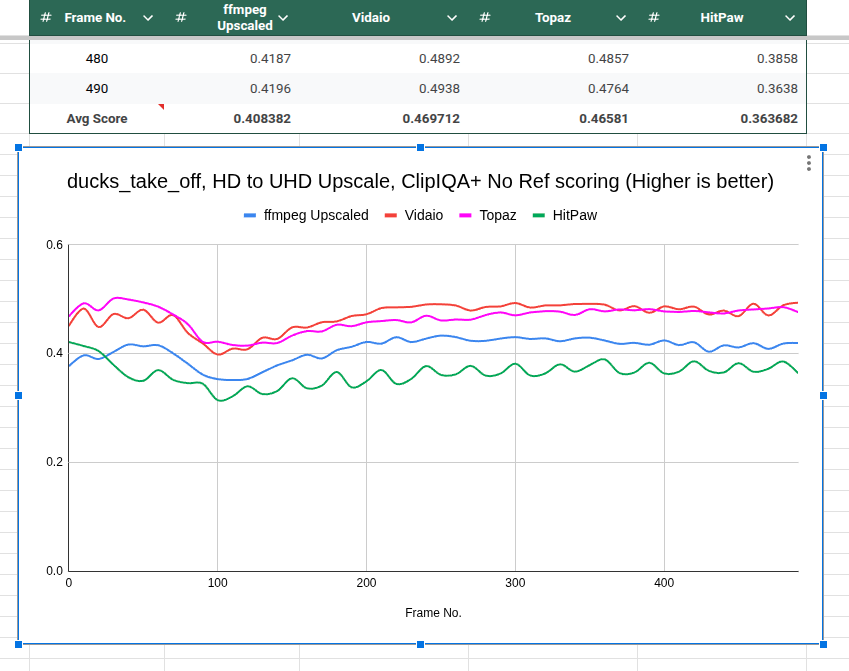

In a published benchmark comparing Vidaio's base mining code against competing tools at standard settings, Vidaio's upscaling model scored 0.4697 on ClipIQA+. Topaz Video AI, a professional-grade tool widely used by filmmakers and content creators, scored 0.4658. ClipIQA+ is a no-reference perceptual quality metric: it scores how natural and visually convincing enhanced footage looks to a human viewer, without needing the original high-resolution footage for comparison. Miners on the network are already improving on that base code and pushing scores higher.

The compression product analyzes each video before encoding. The model adapts to static or dynamic scenes, motion density, and visual complexity. H.264 and H.265 apply uniform rules regardless of content. Vidaio's model treats every file differently.
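Vidaio's compression model is learned and its internals are not described here, but the general idea of content-adaptive encoding can be sketched with a simple heuristic: measure how much consecutive frames change, then spend fewer bits on near-static scenes and more on high-motion ones. Everything below is an illustrative assumption, including the function names, the mean-absolute-difference motion measure, and the bitrate thresholds.

```python
from typing import List, Sequence

Frame = List[List[int]]  # grayscale frame: rows of 0-255 pixel values


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel change between two same-sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else 0.0


def motion_score(frames: Sequence[Frame]) -> float:
    """Mean frame-to-frame change across a clip; 0 means fully static."""
    if len(frames) < 2:
        return 0.0
    diffs = [mean_abs_diff(frames[i - 1], frames[i]) for i in range(1, len(frames))]
    return sum(diffs) / len(diffs)


def pick_bitrate_kbps(score: float) -> int:
    """Map a motion score to a target bitrate.
    HYPOTHETICAL thresholds and bitrates, chosen only for illustration."""
    if score < 2.0:
        return 800    # near-static scene: compress aggressively
    if score < 10.0:
        return 2500   # moderate motion
    return 6000       # high motion: spend more bits to hold quality


# Two toy 2x2 clips: one static, one where two pixels brighten every frame.
static_clip = [[[100, 100], [100, 100]] for _ in range(5)]
moving_clip = [[[i * 40, 0], [0, i * 40]] for i in range(5)]

for name, clip in [("static", static_clip), ("moving", moving_clip)]:
    score = motion_score(clip)
    print(f"{name}: motion={score:.1f}, bitrate={pick_bitrate_kbps(score)} kbps")
```

A learned model can weigh many more signals (texture, scene cuts, where viewers look), but the decision structure is the same: analyze first, then choose encoding settings per piece of content.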

To understand why the 80% target matters: H.264 already shrinks raw video by well over 90% compared to uncompressed footage, and H.265 cuts file sizes by roughly another 40 to 50% relative to H.264 at comparable quality. Vidaio's 80% target is a reduction from the already-compressed source files platforms actually store and deliver, not from raw uncompressed video. That is a materially harder benchmark. The target is still in active testing, though current results already show improvements over H.264 and H.265 on the same source material.
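Because each percentage cut applies to whatever is left after the previous one, reductions compound multiplicatively rather than adding up. The minimal sketch below uses only the figures quoted in this section and assumes, for illustration, that the stored source files are H.264; the helper `remaining_fraction` is a hypothetical name. On that assumption, hitting the 80% target would mean files roughly 2.5 to 3 times smaller than an H.265 encode of the same content.

```python
def remaining_fraction(*reductions: float) -> float:
    """Fraction of the original size left after successive percentage
    reductions, each given as a value between 0 and 1."""
    fraction = 1.0
    for r in reductions:
        if not 0.0 <= r <= 1.0:
            raise ValueError(f"reduction must be between 0 and 1, got {r}")
        fraction *= 1.0 - r
    return fraction


# Assumption: the stored source files are H.264, normalized to size 1.0.
h265_low = remaining_fraction(0.50)       # H.265 at ~50% below H.264
h265_high = remaining_fraction(0.40)      # H.265 at ~40% below H.264
vidaio_target = remaining_fraction(0.80)  # 80% below the stored source

print(f"H.265 encode:    {h265_low:.0%} to {h265_high:.0%} of the H.264 file")
print(f"Vidaio target:   {vidaio_target:.0%} of the H.264 file")
print(f"Target vs H.265: {h265_low / vidaio_target:.1f}x to "
      f"{h265_high / vidaio_target:.1f}x smaller")
# Reductions compound: two successive 50% cuts leave 25%, not 0%.
print(f"Two 50% cuts leave {remaining_fraction(0.5, 0.5):.0%}")
```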

A media company storing 1 petabyte of already-compressed video could, if Vidaio hits its target, store the same content in 200 terabytes. That is not a cost reduction. That is a balance sheet event.
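If storage cost scales linearly with volume, as the earlier discussion of structural forces assumes, the storage side of that claim is simple arithmetic. The sketch below reproduces the 1 PB to 200 TB figure and turns it into a monthly bill. The per-terabyte price is a hypothetical placeholder, not a quoted rate from any provider, and bandwidth (delivery) savings are left out.

```python
TB_PER_PB = 1000  # decimal units, matching the 1 PB -> 200 TB figure


def monthly_storage_cost(terabytes: float, price_per_tb_month: float) -> float:
    """Storage cost modeled as linear in stored volume."""
    return terabytes * price_per_tb_month


# HYPOTHETICAL price per TB-month; replace with your provider's actual rate.
PRICE_PER_TB_MONTH = 20.0

library_tb = 1 * TB_PER_PB        # 1 PB of already-compressed video
target_reduction = 0.80           # Vidaio's stated target
compressed_tb = library_tb - library_tb * target_reduction

before = monthly_storage_cost(library_tb, PRICE_PER_TB_MONTH)
after = monthly_storage_cost(compressed_tb, PRICE_PER_TB_MONTH)
annual_savings = (before - after) * 12

print(f"Stored volume: {library_tb:,.0f} TB -> {compressed_tb:,.0f} TB")
print(f"Monthly bill at the assumed rate: ${before:,.0f} -> ${after:,.0f}")
print(f"Annual savings at the assumed rate: ${annual_savings:,.0f}")
```

Whatever the real rate, the linear model means the bill shrinks by the same 80% as the stored volume.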

AWS Hires Engineers. It Cannot Build This.

Vidaio runs on Bittensor because Bittensor solves the core economic problem that centralized providers cannot.

In a centralized model, the provider owns the hardware, sets the price, and captures the margin. As demand grows, so does their leverage.

Bittensor changes the structure entirely. Miners on Subnet 85 compete to produce the best video processing outputs. Validators assess quality using objective, open-source metrics: VMAF for synthetic tasks, where a reference video exists to compare against, and PieAPP and ClipIQA+ for organic jobs. Emissions flow to the miners producing the highest-quality results. Miners optimize because better performance earns more TAO. The network improves continuously without any central party directing it.
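In Bittensor, validators score miners and submit weights on-chain, and the network's Yuma Consensus aggregates those weights to decide how emissions are split. The sketch below is a deliberately simplified stand-in for that pipeline, not Subnet 85's validator code: it assumes each miner already has a single aggregate quality score (for example, an average of metric results over a batch of tasks) and splits a hypothetical emission amount in proportion to those scores. Miner IDs, scores, and the proportional rule are all illustrative assumptions.

```python
from typing import Dict


def normalize_weights(scores: Dict[str, float]) -> Dict[str, float]:
    """Convert quality scores into weights that sum to 1.
    Negative scores are clamped to 0; if every score is 0, all weights are 0."""
    clamped = {uid: max(score, 0.0) for uid, score in scores.items()}
    total = sum(clamped.values())
    if total == 0:
        return {uid: 0.0 for uid in clamped}
    return {uid: s / total for uid, s in clamped.items()}


def split_emission(scores: Dict[str, float], emission: float) -> Dict[str, float]:
    """Split an emission amount across miners in proportion to their weights."""
    weights = normalize_weights(scores)
    return {uid: w * emission for uid, w in weights.items()}


# Hypothetical aggregate quality scores for three miners.
scores = {"miner_a": 0.47, "miner_b": 0.45, "miner_c": 0.30}
rewards = split_emission(scores, emission=1.0)

for uid, reward in sorted(rewards.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{uid}: {reward:.3f}")
```

A purely proportional split means small quality gaps translate into small reward gaps. A subnet designer could sharpen the curve, for example by raising scores to a power before normalizing, to reward top performers more heavily.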

AWS hires engineers. It cannot build a permissionless marketplace that finds and rewards the best AI video models in the world, then keeps doing it without a product roadmap or a hiring budget. Every improvement a miner ships gets stress-tested against every other miner on the network. The output that survives is the output the market values. Centralized providers cannot replicate this.

Hundreds of Billions of Dollars in Infrastructure, Waiting for a Better Solution

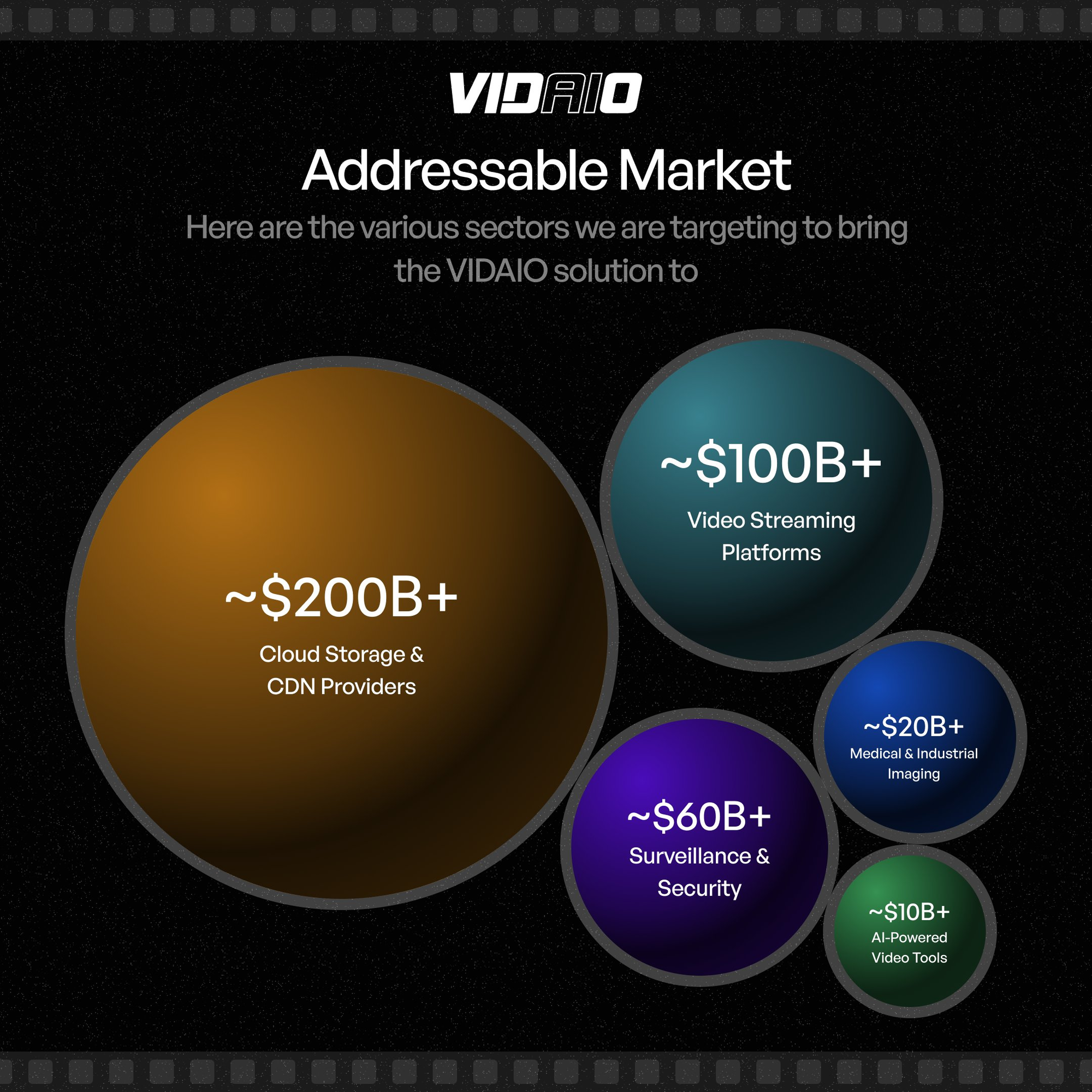

Surveillance and security are a $60 billion market. Security footage is routinely stored at low resolution to manage costs. The consequences are real: blurred faces in criminal investigations, degraded evidence in insurance disputes, footage that cannot answer the questions it was recorded to answer.

Vidaio's upscaling allows operators to store compressed, lower-resolution footage and reconstruct high-quality frames on demand. Cost savings arrive immediately. Forensic and compliance value extends indefinitely.

Cloud storage and CDN providers form a combined addressable market exceeding $140 billion. AWS S3, Google Cloud Storage, and Azure charge for video storage at scale. A compression layer that meaningfully cuts storage requirements is not a feature request. It is a procurement conversation at the board level.

Video streaming platforms represent a market exceeding $100 billion (Grand View Research, 2024). Bandwidth, not storage, is the primary infrastructure expense. Lower file sizes mean lower delivery costs, better playback in low-connectivity environments, and improved quality at the edge. Any durable cost advantage compounds across hundreds of millions of hours of content.

Medical and industrial imaging is a market valued at over $40 billion in 2024, according to Grand View Research and IMARC Group. High-resolution diagnostic scans cannot lose perceptual detail in compression. Vidaio's adaptive compression, which analyzes scene complexity before encoding, is designed to address this directly: smaller files, with clinical detail preserved.

The AI-powered video analytics market was valued at $12.71 billion in 2024 and is growing at 19.5% annually, according to Grand View Research. As AI video generation scales, the volume of synthetic content requiring processing, storage, and delivery will exceed anything produced by human creators. The infrastructure layer serving that demand is being built now.

The API Is Where the Subnet Becomes a Business

Vidaio's roadmap extends into live streaming with real-time AI upscaling and compression, adaptive bitrate streaming for variable-connectivity playback, and a RESTful API allowing external platforms to integrate Vidaio's processing directly into their pipelines.

Right now, Vidaio runs as a subnet. Jobs are synthetic or from early adopters. Emissions flow from internal validator scoring. The incentive mechanism runs on internal demand.

The API changes this. A streaming platform integrating Vidaio's compression API does not need to understand Bittensor. It does not need to hold TAO or run a node. It submits a video job, the subnet processes it, and the platform receives the output. Behind the scenes, that organic job gets chunked, queued across miners, and scored by validators, generating real-world demand flowing directly into emission calculations.
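The chunk-and-queue flow can be sketched as follows. This is a hypothetical illustration, not Vidaio's implementation or API: it assumes jobs are split into fixed-length time segments and assigned to miners round-robin, whereas a production subnet might route chunks by miner availability or past scores. The `Chunk` type, `chunk_job`, and `assign_round_robin` are names invented for this example.

```python
from dataclasses import dataclass
from itertools import cycle
from typing import List


@dataclass
class Chunk:
    job_id: str
    index: int
    start_s: float
    end_s: float
    miner: str = ""  # filled in when the chunk is queued to a miner


def chunk_job(job_id: str, duration_s: float, chunk_s: float) -> List[Chunk]:
    """Split a job into consecutive fixed-length time segments.
    The final chunk is shorter if the duration is not an exact multiple."""
    if duration_s <= 0 or chunk_s <= 0:
        raise ValueError("duration and chunk length must be positive")
    chunks: List[Chunk] = []
    start, index = 0.0, 0
    while start < duration_s:
        end = min(start + chunk_s, duration_s)
        chunks.append(Chunk(job_id, index, start, end))
        start, index = end, index + 1
    return chunks


def assign_round_robin(chunks: List[Chunk], miners: List[str]) -> List[Chunk]:
    """Queue chunks across miners in turn (a stand-in for real routing)."""
    if not miners:
        raise ValueError("no miners available")
    for chunk, miner in zip(chunks, cycle(miners)):
        chunk.miner = miner
    return chunks


# Hypothetical 95-second job split into 30-second chunks across three miners.
queued = assign_round_robin(
    chunk_job("job-001", duration_s=95.0, chunk_s=30.0),
    ["miner_a", "miner_b", "miner_c"],
)
for c in queued:
    print(f"chunk {c.index}: {c.start_s:.0f}-{c.end_s:.0f}s -> {c.miner}")
```

Once miners return their processed chunks, validators would score each one and the outputs would presumably be reassembled in index order; both steps are omitted here.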

This is how Bittensor subnets graduate from infrastructure experiments to revenue-generating products. Organic job volume creates validator demand. Validator demand drives staking. Staking drives alpha token appreciation. The entire economic stack tightens when real-world usage anchors the incentive mechanism.

For investors watching Subnet 85, the API launch is not a product milestone. It is the signal that external demand is about to connect to the internal incentive structure, and the two together are more durable than either alone.


The Thesis

The video infrastructure incumbents (cloud providers, CDN operators, and centralized processing platforms) have the home advantage: the contracts, the distribution, the switching costs. What they do not have is a permissionless network of specialists continuously competing to produce better AI video models, with every improvement stress-tested against every other model in real time.

Vidaio is building that network on Bittensor. Its published upscaling benchmark already edges out Topaz Video AI on ClipIQA+. Miners are already pushing past those baseline results. Its compression targets, if achieved at the scale the roadmap describes, would restructure the storage economics of every major video platform on earth.

The incumbents will not lose to a better-funded competitor. They will lose to a system designed to produce better output.

Subnet 85 is building that system.

Disclaimer: This article is for informational purposes only and does not constitute financial, investment, or trading advice. The information provided should not be interpreted as an endorsement of any digital asset, security, or investment strategy. Readers should conduct their own research and consult with a licensed financial professional before making any investment decisions. The publisher and its contributors are not responsible for any losses that may arise from reliance on the information presented.