
Targon has released a new whitepaper in collaboration with Intel detailing a system for running confidential AI workloads across decentralized, untrusted infrastructure.

The paper, titled “Decentralized Compute on Untrusted Hardware Using Intel® TDX and Encrypted CVMs,” introduces a framework for executing sensitive workloads on third-party machines without exposing data, model weights, or execution state.

Beyond the technical release, the collaboration itself marks a notable milestone for both Targon and the broader Bittensor ecosystem. Having a company of Intel's scale work directly with a Bittensor subnet would have been hard to imagine even a year ago, and it is a significant vote of confidence in what is being built in the ecosystem.

A Core Problem: Trusting Untrusted Hardware

As AI demand accelerates, so does the need for secure compute. But today’s infrastructure remains dominated by centralized cloud providers that control both pricing and trust assumptions.

Targon’s approach starts from a different premise: assume hardware providers are untrusted.

The challenge, then, is enabling workloads to run securely even when the underlying machine could be malicious.

The whitepaper frames this clearly, noting that traditional protections like encryption only secure data at rest or in transit, not during execution. That gap matters: to be processed, data must be decrypted in memory, where a malicious host could read or alter it.

“The primary challenge in building the Targon Virtual Machine was ensuring confidential computation across untrusted operators (hardware providers) without sacrificing performance. We require strong hardware-rooted isolation and portable attestation that could integrate directly into our network’s validation logic. Intel TDX enables secure VM isolation with minimal overhead, while Intel Trust Authority provides verifiable remote attestation that can be embedded into validator workflows. This combination allows us to enforce trust guarantees at the protocol level rather than relying on operator reputation.” - Robert Myers, CEO of Manifold Labs

Confidential Computing As The Foundation

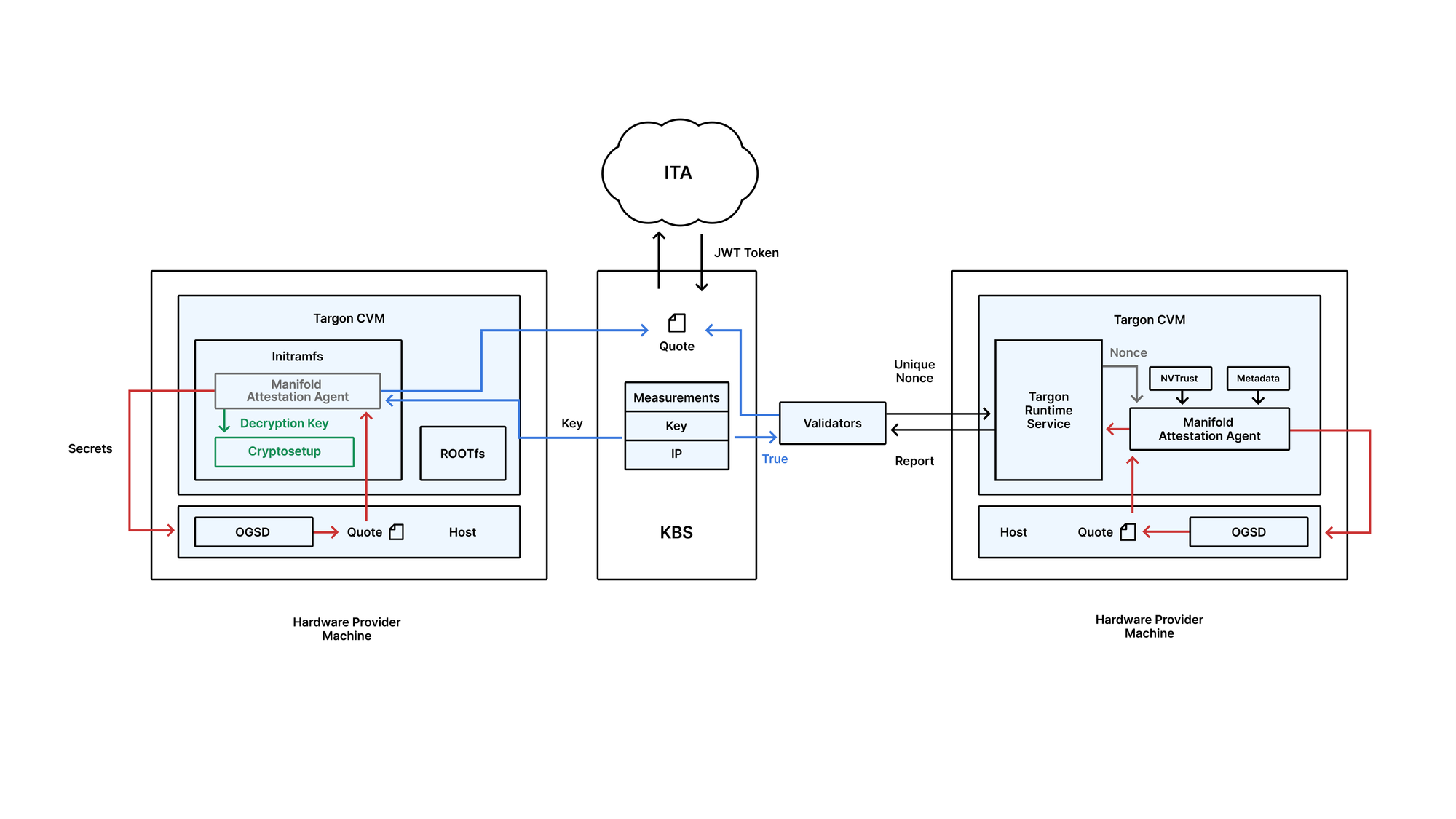

To solve this, Targon leverages Trusted Execution Environments (TEEs), specifically Intel’s Trust Domain Extensions (TDX) and NVIDIA’s confidential compute stack.

These technologies allow workloads to run inside hardware-isolated environments where even the host system cannot access memory or inspect execution.

The system provisions fully encrypted Confidential Virtual Machines (CVMs), which are:

- Encrypted at rest, in transit, and in use

- Cryptographically tied to specific hardware instances

- Only decrypted after successful remote attestation

As described in the paper, each VM is launched with a unique encryption key that is only released if the system proves it is running in a verified, trusted environment.
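To make the key-release step concrete, here is a minimal Python sketch of attestation-gated key release. It is not Targon's implementation: the names `KeyBroker`, `AttestationReport`, and `sign_report` are hypothetical, and an HMAC over a shared secret stands in for the hardware-signed TDX quote that a real deployment would verify through an attestation service such as Intel Trust Authority. The broker holds a unique key per VM and hands it out only if the report's signature verifies and its measurement matches an approved image.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Optional

# Hypothetical stand-in for hardware-rooted signing keys. Real TDX quotes are signed
# by the platform and verified via an attestation service, not with a shared HMAC secret.
HARDWARE_KEY = secrets.token_bytes(32)


@dataclass
class AttestationReport:
    vm_id: str        # ties the report to one specific VM instance
    measurement: str  # hash of the VM's boot image and configuration
    signature: bytes  # proof the report came from trusted hardware


def sign_report(vm_id: str, measurement: str) -> AttestationReport:
    """Simulate the hardware producing a signed report (hypothetical)."""
    msg = f"{vm_id}:{measurement}".encode()
    sig = hmac.new(HARDWARE_KEY, msg, hashlib.sha256).digest()
    return AttestationReport(vm_id, measurement, sig)


class KeyBroker:
    """Releases a per-VM disk key only after attestation succeeds (hypothetical)."""

    def __init__(self, expected_measurement: str) -> None:
        self.expected_measurement = expected_measurement
        self._keys: dict[str, bytes] = {}

    def register_vm(self, vm_id: str) -> None:
        # Each VM gets its own unique encryption key at provisioning time.
        self._keys[vm_id] = secrets.token_bytes(32)

    def release_key(self, report: AttestationReport) -> Optional[bytes]:
        msg = f"{report.vm_id}:{report.measurement}".encode()
        expected_sig = hmac.new(HARDWARE_KEY, msg, hashlib.sha256).digest()
        if not hmac.compare_digest(expected_sig, report.signature):
            return None  # report was not produced by trusted hardware
        if report.measurement != self.expected_measurement:
            return None  # VM is not running the approved image
        return self._keys.get(report.vm_id)  # None if the VM was never registered
```

In this sketch, a report whose measurement differs from the approved image, or whose signature does not verify, gets `None` instead of a key, so the VM's encrypted disk stays unreadable.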

This creates a zero-trust model where security guarantees come from hardware-level verification rather than operator reputation.

Continuous Attestation And Network-Level Enforcement

Beyond secure boot, the system introduces continuous verification.

Nodes must repeatedly prove they are still operating in a valid confidential state, with validators checking attestation proofs roughly once per block interval.

If a node fails verification, it is removed from the network and no longer eligible to run workloads.

This design ensures that trust is not a one-time assumption but an ongoing requirement enforced at the protocol level.
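The continuous check can be sketched as a loop that runs once per block. This is an illustrative Python model, not the protocol's actual validator code: `Validator`, `on_new_block`, `eligible_for_work`, and the `verify` callback are hypothetical, and passing the block number to `verify` (so a node must produce a fresh proof each round rather than replaying an old one) is an assumption about how freshness might be enforced.

```python
from dataclasses import dataclass, field
from typing import Callable, Set


@dataclass
class Validator:
    """Hypothetical validator that re-checks every node's attestation each block."""

    # verify(node_id, block_number) -> True if the node's fresh proof checks out.
    # Binding the proof to the block number is an assumption (freshness / anti-replay).
    verify: Callable[[str, int], bool]
    active_nodes: Set[str] = field(default_factory=set)

    def on_new_block(self, block_number: int) -> Set[str]:
        """Re-verify all active nodes; evict any that fail. Returns the evicted ids."""
        evicted = {n for n in self.active_nodes if not self.verify(n, block_number)}
        self.active_nodes -= evicted  # failed nodes are removed from the network
        return evicted

    def eligible_for_work(self, node_id: str) -> bool:
        """Only nodes still in the active set (not yet evicted) may receive workloads."""
        return node_id in self.active_nodes
```

Any node whose proof fails in a given round is evicted immediately, and `eligible_for_work` returns `False` for it from then on, mirroring the removal rule described above.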

A Decentralized Alternative To Cloud Compute

At a high level, the architecture mirrors the broader Bittensor thesis of turning idle, globally distributed hardware into productive infrastructure.

Targon’s implementation adds a critical layer of verifiable confidentiality.

The system introduces:

- Permissionless hardware participation with economic incentives

- Hardware-level isolation for sensitive workloads

- Transparent, market-driven pricing for compute resources

- Kubernetes-based orchestration across a decentralized network

By combining these elements, the platform aims to offer a viable alternative to traditional cloud providers while maintaining enterprise-grade security guarantees.

Why It Matters For The Bittensor Ecosystem

For Bittensor and adjacent decentralized AI networks, the biggest bottleneck is not raw compute but trusted compute.

Training data, model weights, and inference pipelines are increasingly valuable assets. Running them on untrusted infrastructure without confidentiality guarantees has been a major limitation.

Targon’s virtual machine layer directly addresses this constraint.

If successful, it could unlock a new class of workloads for decentralized networks:

- Proprietary model training

- Enterprise AI inference

- Sensitive data processing

- High-value compute markets that previously required centralized clouds

The release of this whitepaper signals that confidential computing is moving from theory to production-ready infrastructure within the decentralized AI stack.

Disclaimer: This article is for informational purposes only and does not constitute financial, investment, or trading advice. The information provided should not be interpreted as an endorsement of any digital asset, security, or investment strategy. Readers should conduct their own research and consult with a licensed financial professional before making any investment decisions. The publisher and its contributors are not responsible for any losses that may arise from reliance on the information presented.